Read-only by default

Why Skyportal

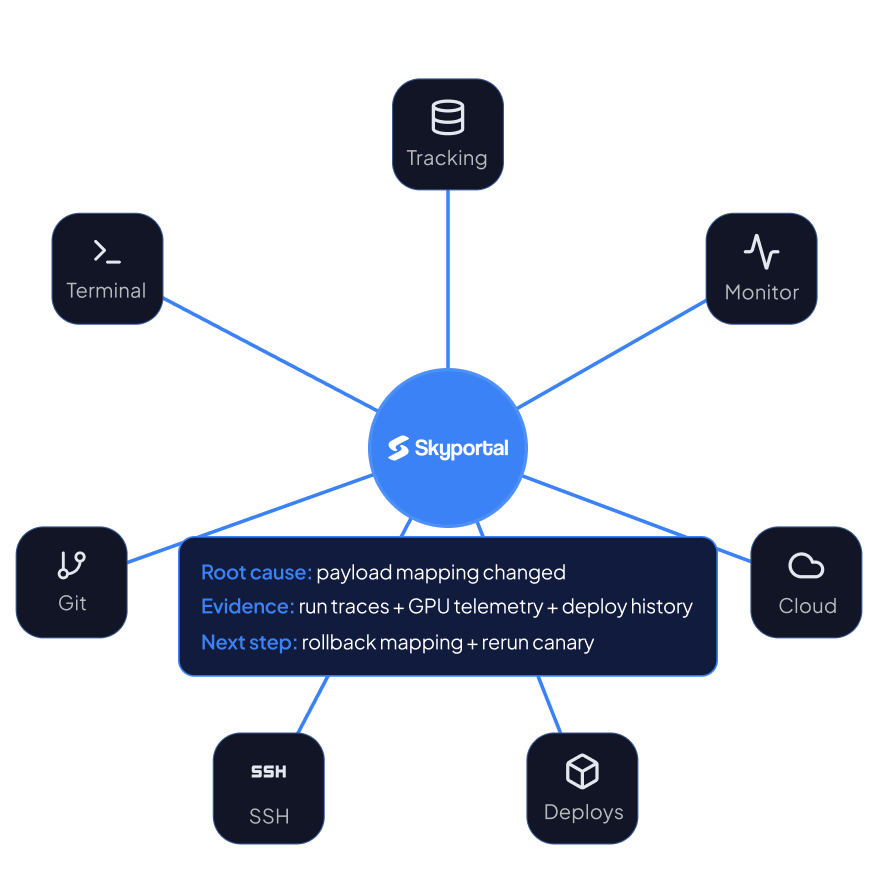

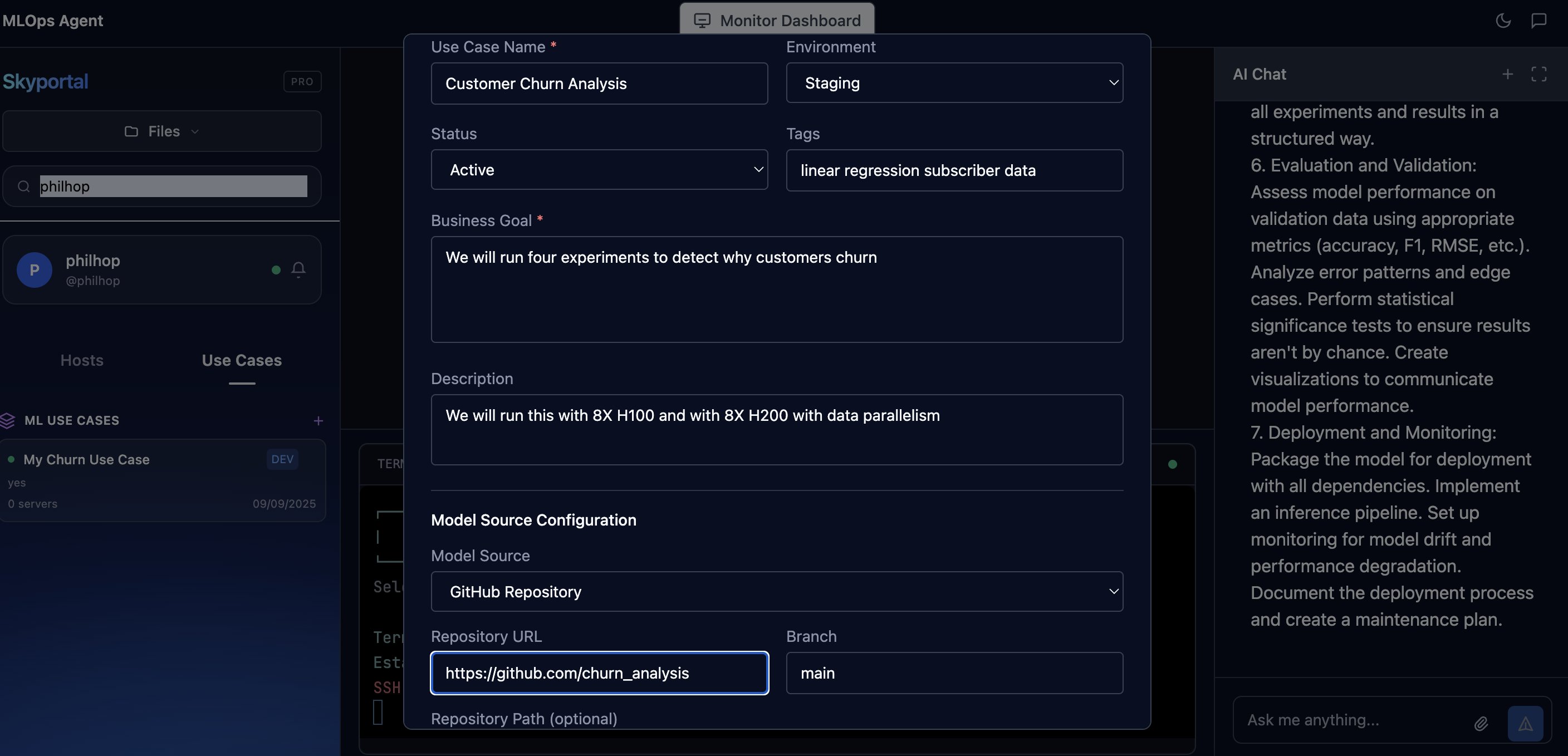

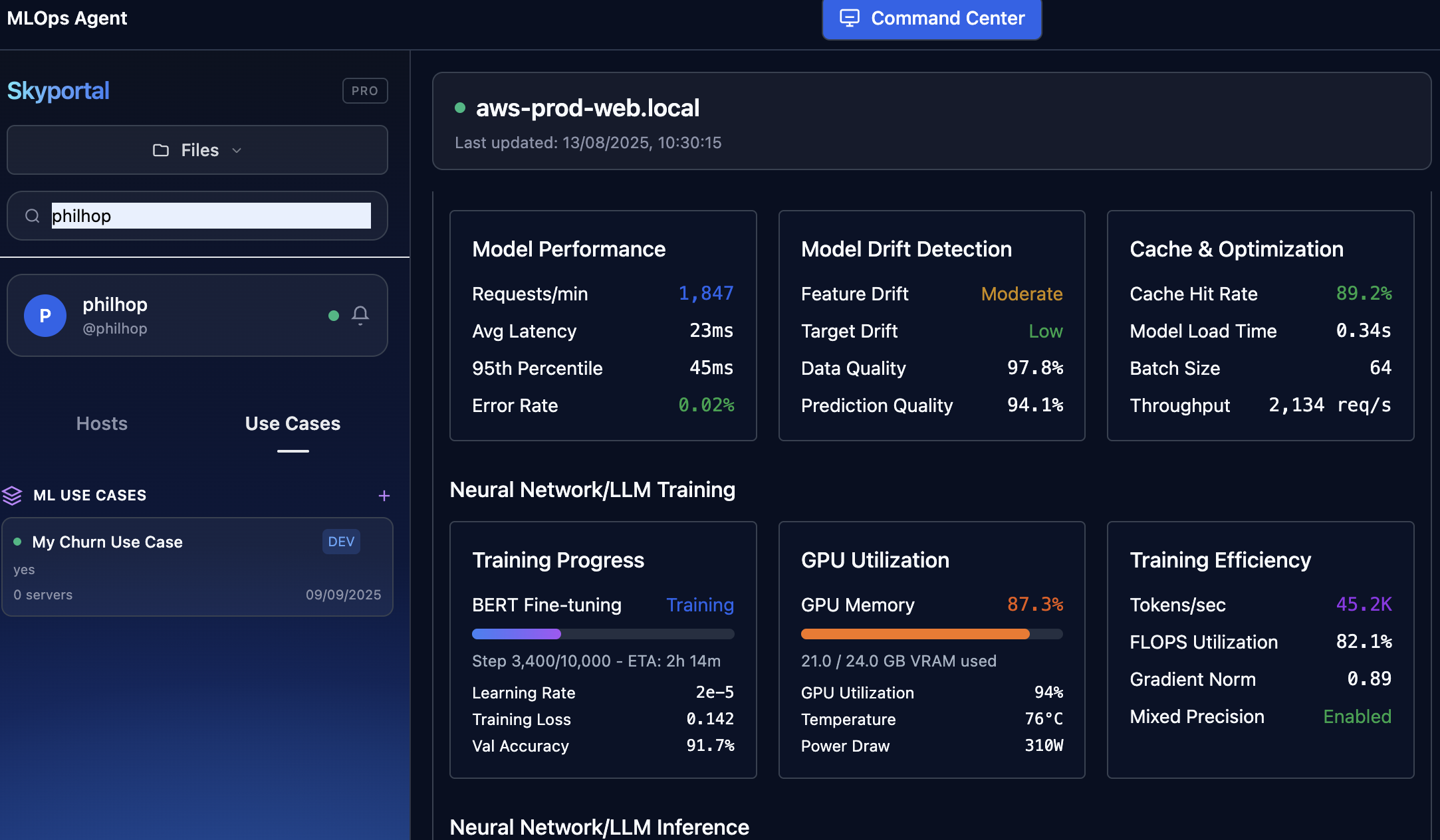

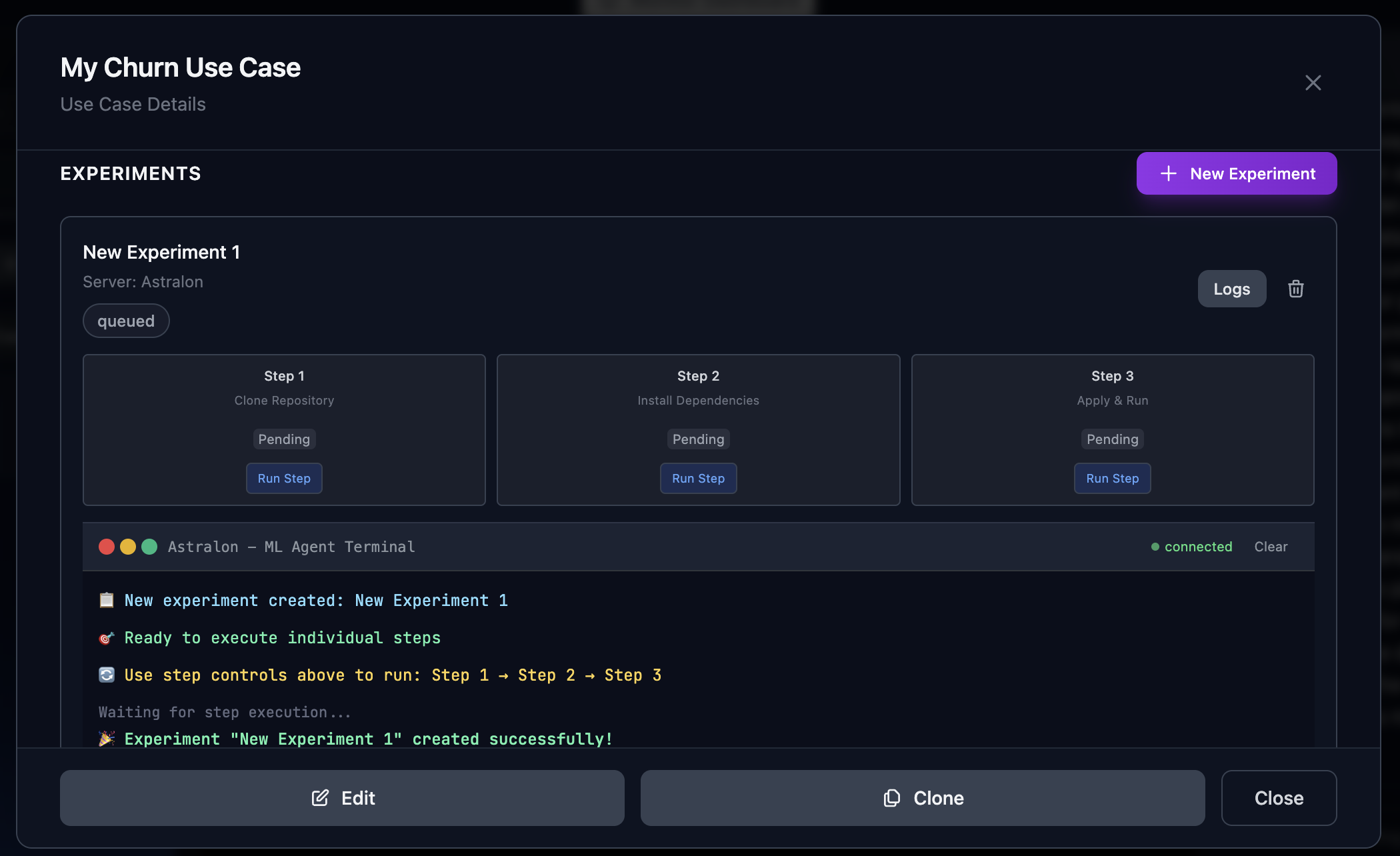

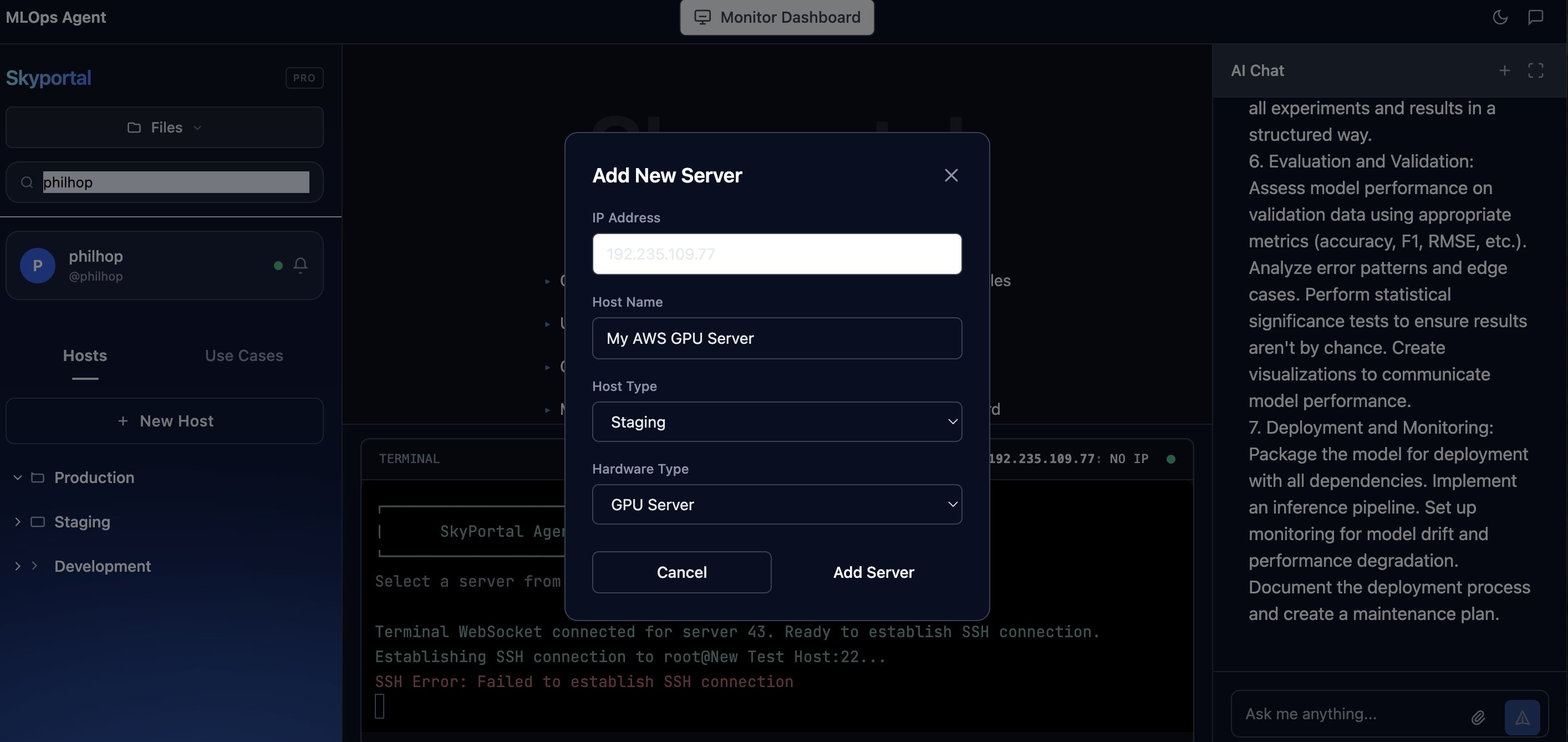

Most teams already have copilots inside individual tools. Skyportal gives SARA one MLOps context layer across fleet, environments, code, runs, and monitoring.

The problem with current MLOps tools

Copilots are everywhere. The answers are still scattered.

Most MLOps pain is operational: SSH sprawl, environment drift, broken dependencies, driver conflicts, inconsistent deployments, and missing visibility.

The slow part is not compute. It is coordination.

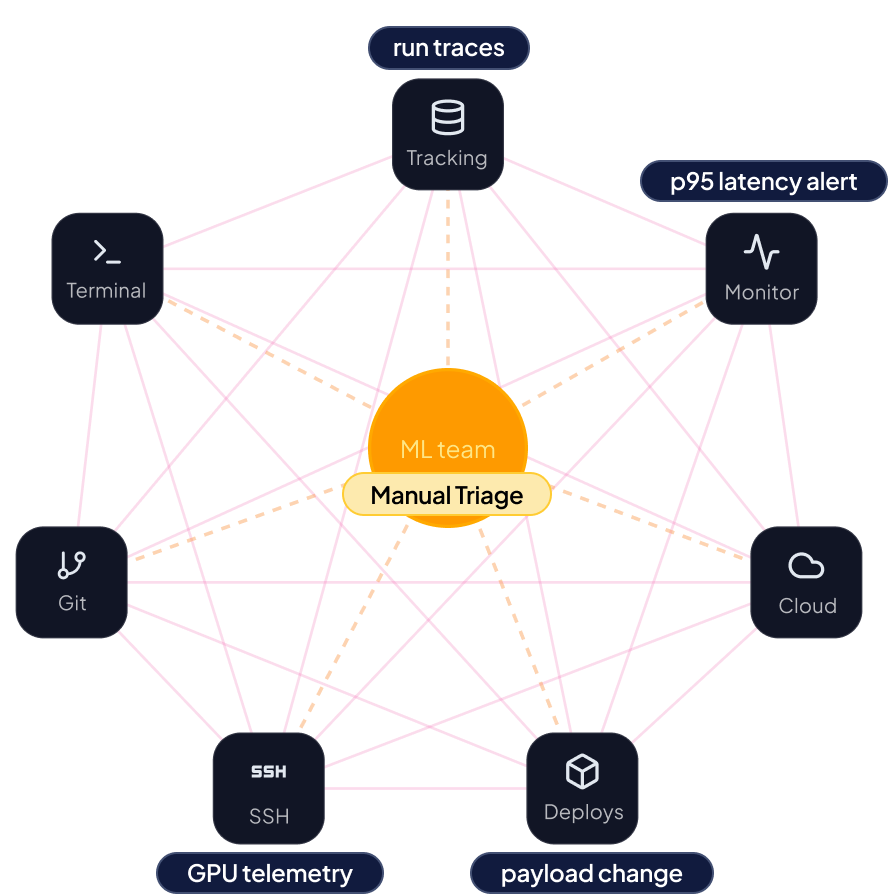

Latency is up, drift is rising, and GPU utilization has dropped on one production inference path. To find the cause, the team checks monitoring, run traces, deploy history, and GPU telemetry separately.